Introduction

Artificial intelligence (AI) has ushered in unprecedented advancements in digital content creation, enabling systems to generate hyper-realistic audio-visual materials. Among these innovations, deepfakes stand out as both technologically impressive and legally disruptive. While AI-driven synthetic media has legitimate applications in entertainment, education, and accessibility, its misuse raises serious concerns for privacy, data protection, and human dignity. This post argues that the creation and dissemination of deepfakes, particularly without consent, violate core data protection principles, especially the requirement that personal data be processed in a fair, lawful, and transparent manner under the Nigeria Data Protection Act (NDPA) 2023 and comparable global regimes.

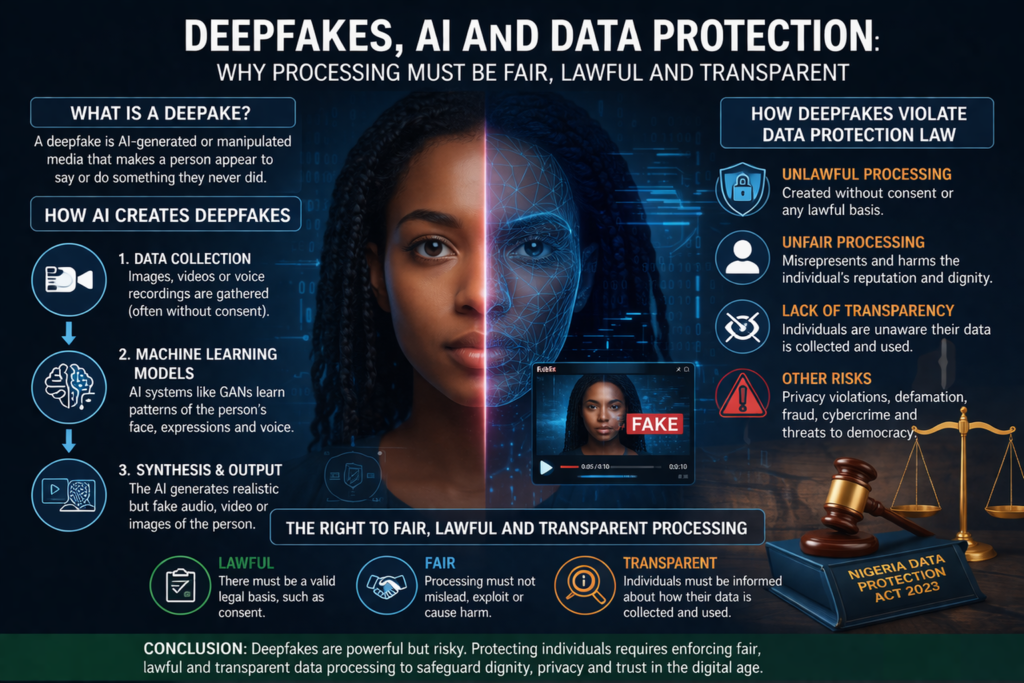

Conceptualizing Deepfakes

Deepfakes are AI-generated or AI-manipulated media that convincingly depict individuals engaging in actions or speech they never performed. The term derives from “deep learning,” a subset of machine learning, and “fake,” reflecting the synthetic nature of the output. These technologies rely on advanced computational models to replicate facial expressions, gestures, and voice patterns, often making detection difficult. As such, deepfakes challenge traditional evidentiary assumptions about the authenticity of digital media.

The Role of AI in Deepfake Production

The creation of deepfakes is fundamentally dependent on AI systems, particularly:

- Generative Adversarial Networks (GANs)

- Deep neural networks trained on large datasets

- Voice cloning and facial recognition algorithms

The process typically involves:

- Data harvesting – scraping images, videos, or audio (often without consent)

- Model training – teaching AI to learn patterns of a person’s identity

- Synthetic generation – producing manipulated or entirely fabricated content

At each stage, personal data is processed, often including biometric identifiers such as facial geometry and voice patterns.

Deepfakes as Personal Data Processing

Under the NDPA 2023, “processing” encompasses any operation performed on personal data, including collection, storage, analysis, and dissemination. Deepfake technology clearly falls within this definition because it:

- Collects identifiable personal data

- Analyses and transforms that data

- Generates outputs that remain linked to identifiable individuals

Where facial or voice data is used, that data may qualify as sensitive personal data, attracting stricter legal safeguards.
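The reasoning in this section can be sketched as a mapping from each stage of deepfake production to the processing operations it involves. The Python sketch below is illustrative only. The `Stage` class, the `PIPELINE` mapping, and the function names are hypothetical and chosen for this example. The operation labels are the four examples named in this post, not the statutory wording, and the real definition is broader because it covers any operation performed on personal data.

```python
from __future__ import annotations

from dataclasses import dataclass

# The four example operations named in this post. The list is
# non-exhaustive: "processing" covers any operation on personal data.
PROCESSING_OPERATIONS = frozenset(
    {"collection", "storage", "analysis", "dissemination"}
)


@dataclass(frozen=True)
class Stage:
    """One step of a deepfake pipeline (hypothetical model)."""
    name: str
    operations: frozenset[str]


# Assumed mapping of stages to operations, for illustration only.
PIPELINE = [
    Stage("data harvesting", frozenset({"collection", "storage"})),
    Stage("model training", frozenset({"analysis"})),
    Stage("synthetic generation", frozenset({"analysis", "dissemination"})),
]


def is_processing(stage: Stage) -> bool:
    """A stage counts as processing if it performs any listed operation."""
    return bool(stage.operations & PROCESSING_OPERATIONS)


def stages_that_process(pipeline: list[Stage]) -> list[str]:
    """Return the names of the stages that amount to processing."""
    return [stage.name for stage in pipeline if is_processing(stage)]


if __name__ == "__main__":
    print(stages_that_process(PIPELINE))
```

Under these assumptions every stage is flagged, which mirrors the point made above: no step of the pipeline falls outside the scope of processing.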

Violation of the Principle of Lawful Processing

A central requirement of data protection law is that processing must be grounded in a lawful basis, such as consent, contractual necessity, or legitimate interest.

Deepfakes frequently fail this requirement because:

- Data subjects are unaware their data is being used

- Consent is neither sought nor obtained

- The purpose (e.g., deception, exploitation) is illegitimate

Accordingly, most non-consensual deepfakes constitute prima facie unlawful processing under the NDPA.

The Fairness Requirement and the Protection of Human Dignity

Fairness demands that personal data be used in ways that respect the reasonable expectations and rights of individuals. Non-consensual deepfakes violate fairness because they:

- Misrepresent identity and distort reality

- Expose individuals to reputational harm, harassment, or exploitation

- Undermine personal autonomy and dignity

Deepfake pornography and deepfake-driven political manipulation are extreme examples of this unfairness: individuals are inserted, against their will, into harmful narratives.

Transparency and the Problem of Invisible Processing

Transparency requires that data subjects be informed about how their data is collected and used. Deepfake systems typically operate in secrecy:

- Data is scraped from publicly available platforms without notice

- Individuals are not informed of downstream uses

- Audiences are often deceived into believing the content is authentic

This opacity directly contravenes the transparency obligations under the NDPA and global standards such as the European Union's General Data Protection Regulation (GDPR).
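The three-part analysis in the preceding sections (lawfulness, fairness, transparency) can be summarised as a simple checklist. The Python sketch below is a deliberately simplified illustration and not a legal test. The `ProcessingActivity` record, its field names, and the `failed_principles` function are hypothetical, and each principle is reduced to the yes/no questions this post raises.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessingActivity:
    """Simplified, hypothetical record of one use of a person's data."""
    consent_obtained: bool                # consent was sought and given
    other_lawful_basis: bool              # e.g. contract, legitimate interest
    within_reasonable_expectations: bool  # rough proxy for fairness
    subject_informed: bool                # data subject was told of the use
    content_labelled_synthetic: bool      # audience is told it is synthetic


def failed_principles(activity: ProcessingActivity) -> list[str]:
    """List the principles the activity fails under this simplified model."""
    failures = []
    if not (activity.consent_obtained or activity.other_lawful_basis):
        failures.append("lawfulness")
    if not activity.within_reasonable_expectations:
        failures.append("fairness")
    # Transparency as framed in this post: both the data subject and the
    # audience must know what is happening.
    if not (activity.subject_informed and activity.content_labelled_synthetic):
        failures.append("transparency")
    return failures


# A typical non-consensual deepfake, as described in this post.
NON_CONSENSUAL = ProcessingActivity(
    consent_obtained=False,
    other_lawful_basis=False,
    within_reasonable_expectations=False,
    subject_informed=False,
    content_labelled_synthetic=False,
)

# A consented, clearly labelled use (e.g. a licensed film production).
CONSENTED = ProcessingActivity(
    consent_obtained=True,
    other_lawful_basis=False,
    within_reasonable_expectations=True,
    subject_informed=True,
    content_labelled_synthetic=True,
)

if __name__ == "__main__":
    print(failed_principles(NON_CONSENSUAL))
    print(failed_principles(CONSENTED))
```

In this model the non-consensual example fails all three principles while the consented, labelled use fails none, which is consistent with the distinction drawn in the introduction between legitimate synthetic media and its misuse.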

Additional Legal Implications

Privacy Violations

Deepfakes interfere with the right to privacy guaranteed by section 37 of the 1999 Constitution by appropriating a person's identity without consent.

Defamation

False representations conveyed through a deepfake may lower a person’s reputation in the eyes of society, exposing the creator or publisher to liability for defamation.

Cybercrime

Deepfakes are increasingly used in fraud, impersonation, and social engineering attacks, implicating Nigeria’s Cybercrimes (Prohibition, Prevention, etc.) Act 2015.

The Nigerian Legal and Regulatory Context

Nigeria’s data protection framework, strengthened by the NDPA 2023, provides a foundation for regulating deepfakes. However, gaps remain:

- No explicit legal definition of deepfakes

- Limited enforcement capacity

- Absence of AI-specific legislation

Regulatory bodies such as the Nigeria Data Protection Commission must therefore adopt proactive strategies, including:

- Issuing guidelines on AI and synthetic media

- Classifying deepfake technologies as high-risk processing

- Enforcing stricter consent and accountability requirements